Unfortunately that often goes hand-in-hand with making the query simpler, and I don't know how much control you have over that. What you often want to try and do in these cases is to work towards plan stability, so that no matter what happens (outside of changing the schema), then a good enough plan is always generated. from SQL Server 2005 Books Online, Recompiling Stored Procedures: As a database is changed by such actions as adding indexes or changing data in indexed. To answer why this suddenly changed one night - well there could be updated statistics, or a 'better' plan got pushed out of cache? I can envisage lots of scenarios where 'nothing' has changed, and yet the performance suddenly tanks. The slower plan does not have the early termination, which means that it thinks it has arrived at the best plan for the estimates and statistics that it has - clearly this is not the case as borne out by your experience. (see for more info) It's not unusual for this seemingly negative position to actually deliver you a plan that is good - just because it didn't evaluate every single possibility, doesn't mean it hasn't already got the best plan. We also see that the faster plan has a Reason for early termination: Time Out - which means that the optimiser reached a threshold and had to abandon looking for any further possible plans and go with the best it had so far. The queries used by stored procedures, or triggers, and user-defined functions are optimized only when they. You can also add the WITH RECOMPILE directly to the stored. Unfortunately the plan and query are so complex it is difficult to pinpoint exactly why. The qualified or unqualified name of a stored procedure, trigger, table, view, or user-defined function in. This tells SQL Server to throw away the existing query plan. However in your case the actual performance is improved by this 'wrong' plan, when compared to the 'better' plan. So the faster plan wrongly assumes it is going to be doing less work and so chooses a plan and operators based on that assumption.

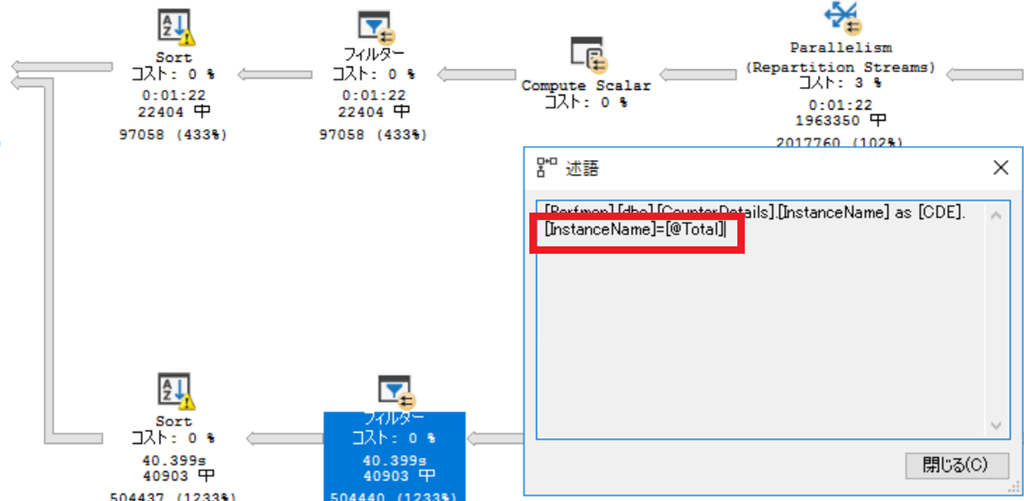

Running on SQL Server Enterprise 2014 SP 2 CU 12. This extreme range of behaviour seems truly peculiar. I would consider the function to be of average complexity. Conversely the '22 hour' plan (with option recompile) estimates a higher row count of 8,659. I have an SQL function which takes 22 hours to run when the option (recompile) is added and 42 minutes when it is left out - so the direct opposite of what I would expect. Looking at the plans (and yes they are fairly complex!) we can see that the '42 min' plan (without option recompile) has an estimated output row count of 2 rows, whereas the actual output is 21k. I think the first point I would make is that option(recompile) is not necessarily an optimisation, I'd view it as a 'change of behaviour', which can affect performance either way.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed